A reader who prefers to remain anonymous writes:

One area where the pro- and anti-gun sides often agree is the presumption that mental health remedies can somehow reduce firearm deaths and injuries, especially those from mass shootings (events with large numbers of victims, although the term is generally applied when as few as three individuals are involved). Both sides of the argument about gun rights presume that at least some answers somehow lie in “improved mental health funding and treatment.”

Much of the underpinning of this presumption relies on research and publication of articles in psychology journals and scholarly papers. But what makes anyone think these studies and papers present valid analysis and not merely opinion?

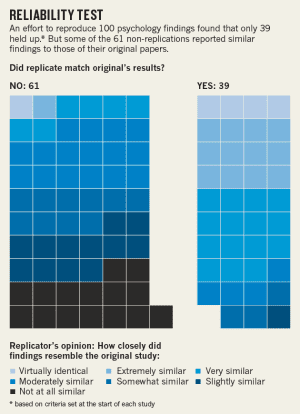

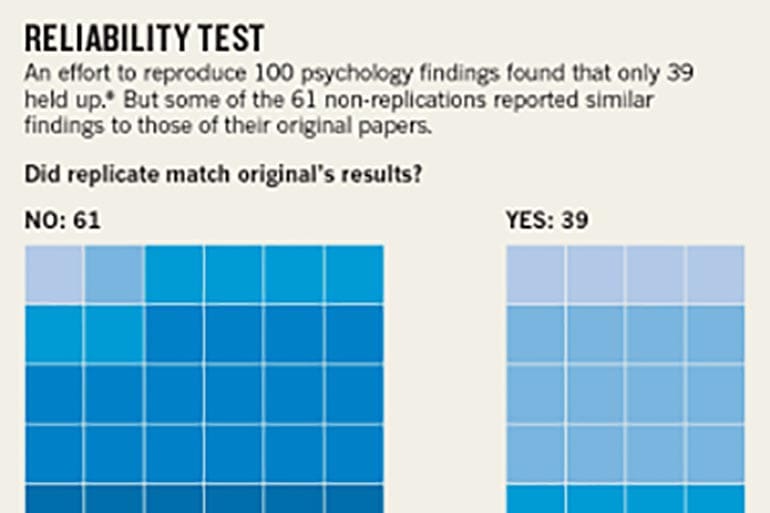

In 2015, Nature magazine published an article declaring that the results in over half of published psychology-related studies couldn’t be reproduced. “Reproducibility” is a hallmark of scientific inquiry because if a proclaimed discovery cannot be replicated, the level of skepticism regarding the validity of the “discovery” rises dramatically.

In the biggest project of its kind, Brian Nosek, a social psychologist and head of the Center for Open Science in Charlottesville, Virginia, and 269 co-authors repeated work reported in 98 original papers from three psychology journals, to see if they independently came up with the same results.

The studies they took on ranged from whether expressing insecurities perpetuates them to differences in how children and adults respond to fear stimuli, to effective ways to teach arithmetic.

Among the findings of the reproducibility study are the following:

- Only 39 of 100 replication attempts were successful

- 97% of the original studies found a significant effect

- 36% of replications found a significant effect

- Average size of effect in reproductions was half that reported by the original research

The point, says Nosek, is not to critique individual papers but to gauge just how much bias drives publication in psychology. For instance, boring but accurate studies may never get published, or researchers may achieve intriguing results less by documenting true effects than by hitting the statistical jackpot: finding a significant result by sheer luck or trying various analytical methods until something pans out.
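The publication-bias mechanism described above can be illustrated with a small simulation. This is only a sketch under stated assumptions, not a model of the actual reproducibility project: the function name `simulate` and every parameter value (true effect size, standard error, number of studies) are hypothetical and chosen purely for illustration. It assumes each study measures the same modest true effect with random noise, and that only studies crossing a significance threshold get published.

```python
import random
import statistics


def simulate(n_studies=20000, true_effect=0.2, se=0.15, z_crit=1.96, seed=0):
    """Simulate publication bias with hypothetical, illustrative parameters.

    Each study estimates the same true effect with Gaussian noise.
    Only estimates that cross the significance threshold are "published",
    and each published study is then replicated once with the same precision.
    """
    rng = random.Random(seed)
    published = []
    replications = []
    for _ in range(n_studies):
        original = rng.gauss(true_effect, se)
        if original / se > z_crit:  # the journal only accepts significant results
            published.append(original)
            # An independent replication draws from the same true effect.
            replications.append(rng.gauss(true_effect, se))

    significant_replications = sum(1 for r in replications if r / se > z_crit)
    return {
        "published_share": len(published) / n_studies,
        "mean_published_effect": statistics.mean(published),
        "mean_replication_effect": statistics.mean(replications),
        "replication_rate": significant_replications / len(replications),
    }


if __name__ == "__main__":
    for name, value in simulate().items():
        print(f"{name}: {value:.3f}")
```

Under these assumptions, the published studies overstate the true effect because only the lucky draws clear the threshold, while the replications drift back toward the true value and most of them fail to reach significance. No fraud is needed to produce that pattern; selective publication alone is enough.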

The report has some very interesting observations about the reliability of such research. Given the questionable nature of the research, we should be very careful about signing on to anti-gun activists’ proposals for so-called red flag laws or mental health screening and testing requirements for new or continued gun ownership.

Some even believe making more rules will reduce crime…😅🤣😂😅🤣😂😅🤣😂😅🤣😂😅🤣😂😅🤣🤣

If only somebody would make it illegal to kill people….

Novel idea! I bet it would work.

Never happen, not with this Congress….

Investing confidence in these people would be a huge mistake….

If the concern is mass shootings, I would just recommend everyone read chapter 6 of Jordan Peterson’s book, “12 Rules for Life.”

In fact, legislating that everyone receive a copy of “12 Rules for Life” would likely save more lives from “gun violence” than any kind of gun control.

Legislators could give it a silly name (which is what legislators do) and call it “The Jordan Peterson Gun Violence Prevention Law.”

The JPGVPL? The Jay-pig-vee-pul is a horrible acronym; it will never get out of committee.

I want to buy that book, but I need to finish cleaning my room first.

Nope. The vast, vast majority of “gun deaths” are gang related. America doesn’t have a “gun problem”; it has a gang problem.

They count suicides where a firearm is used as a ‘gun death’.

If you were talking about murders, you’d probably be right, or close to it.

Rosignal,

You and Continental Army are both correct: the United States has a suicide AND gang problem.

There are other cultures (e.g. Japan) where suicide is more frequent than in the US. (I was going to say they have a bigger problem with suicide but that imposes the US cultural value that suicide is always bad and it should be clear that they don’t agree.)

I don’t doubt the reported analysis at all. I doubt that it’s really pertinent to the “problem” we ought to define.

Mass killers who are mentally ill and treatable are needles in a haystack. I can’t imagine any cost-effective means of reaching them. Trying to reduce gun-deaths by reducing mentally ill mass killers is a fool’s errand.

If anything is to be done on a macro scale we have to paint by the numbers. Two-thirds of gun deaths are suicides. Is there any doubt that most of these have a mental illness intersection?

If the real problem were suicide by gunshot then the problem would be simpler. We gun people should persuade our fellows to suicide by other means. Problem solved. Oh; that’s not “IT” you say? Well, then, what is the problem we ought to be defining?

Maybe it’s suicide regardless of means? If that were the issue we ought to have other interest groups participate. E.g., pharmacists and pharmacy technicians, doctors, nurses. Shouldn’t we be talking about safe-storage of lethal drugs from all these people who have access to such drugs?

While closer, I think that suicide regardless of means is still the wrong problem definition. Why should anyone allow the problem definition to be just-in-time suicide-ideation detection? As many people frustrate ER docs by suiciding by means other than gunshot as those by gunshot. Isn’t it late in the game to try to diagnose suicide ideation AFTER the attempt has been made?

Maybe the real problem definition ought to be identifying individuals who are beginning to show signs – or experience symptoms – of mental illness associated with suicide? I.e., primarily depression.

If we improve public health in the area of mental hygiene then we – almost certainly – can relieve a lot of suffering from depression. This is a highly treatable disease via a number of alternative and complementary methods.

Reducing depression – and some other mental illnesses – will almost certainly reduce suicidal ideation. Then, reducing suicides is a natural collateral benefit.

We PotG should be taking the lead in shifting the conversation from suicide-by-gun to suicide-by-any-means to mental-illness to awareness of symptoms and signs of depression (and perhaps other maladies). Then, society – and in particular public health agencies – could actually accomplish something.

Why should we imagine that public health “experts” would be intimidated by our impotent pissing and moaning? Might it be more productive to ask these experts to explain why their gun-control proposals promise more cost-effectiveness than mental hygiene efforts on depression? Will they be eager to admit that they are clueless as to how to screen->diagnose->treat depression?

If only there were a law against suicide.

Never happen, not with this Congress….

Most people commit suicide indoors. Mandate no-suicide signs on every car door and on every door to every building. And make it much more illegaler.

The reason people go on killing sprees is because a strong ever-reinforcing subconscious message is beamed out to everyone 24/7 that says: “when someone doesn’t do what we want, we kill them. When we want something done, we kill people who really get in the way.” That message, although coded, is the only example set in modern global hegemony. There is no role model of ethic for people to admire, the philosophies of the past have taught under novelty and trivia and the further progression of “progress” will only lead further down that same path. The same global hegemony has tricked everyone into believing that there is no god and the construction of the universe and its function is meaningless and the only comfort or gain which can be possessed is in the material world with no care or attention given to people’s choices and actions. Simply making a decision which will result in the largest gain of fiat currency is the only rational logic a person should have to consider. If you make that rationale by doing something which is considered illegal, well, tough luck, your drive made sense but you went about it all wrong, you should have been like Apple’s tax compliance officers, they’re real winners. No loyalty, swaying loyalty, to one’s self, country, god and family is perfectly normal in the pursuit of material gain. The field of psychology is a pseudo-science masquerading as an absolute science. Anyone familiar with the developmental history of psychology as an industry and the editions of the DSM understands that it is a politically-driven standard that’s always changing, and has always changed to suit whatever is most expedient in service to the people that run your world. In a few years, they’ll claim some radical new paradigm and say this is more-perfect than the last, then proceed to call the world to heel.
Any discussion of “mental health” without the mention that you’re allowing the poor to be enlisted and sent overseas to fight in 8 simultaneous wars which the public couldn’t care less about, killing hundreds of thousands of people in their own lands without even a logical deduction as to why, without mentioning that, you’re full of sh!t.

Excellent comment. So in addition to suicide and gangs, we have a leadership / paradigm problem.

Someone on this forum is awake…..

At a tax preparer conference, I had three psychiatrists tell me they can defend every “normal” action/thought as being mental illness. It was why they enjoyed the game so much.

That’s just crazy enough to be true.

The only thing it might really help with is suicides by firearm. If someone has his/her guns taken away, they might do the deed like Thelma and Louise. It is the act, not the tool.

You could say, “it’s the fool, not the tool.”

“Killing is a matter of will, not weapons.

You cannot control the act itself

by passing laws about the means employed.”

The late Col Jeff Cooper, 1958

Shrinks are more mentally unbalanced than anybody. Sending people to a shrink makes very little sense in most cases.

The CDC reports that in 2016 firearms accounted for 51% of all US suicides…what are the hoplophobes doing about the other 49%…oh yeah…nothing…because…guns!

Focusing on the tool employed in suicides is counterproductive. Investing in viable mental health research is a good idea.

Yeah… we need many, many more highly paid mental health professionals. You know, the ones who are trying to put half of all American school-age boys on Ritalin, Dexedrine, and Adderall to keep them from being boys. Then we might get somewhere.

More “mental health” spending is 99% about financing the lives of reliable demtard donors/voters (pshrinks).

Welfare mammies don’t donate to political campaigns, overpaid pshrinks do.

This is why they want “red flag” laws. Happens all the time. People who can’t do their own job angle for a field-promotion, supervising other people doing what they can’t.

“People who can’t do their own job angle for a field-promotion, supervising other people doing what they can’t.”

It’s called “The Peter Principle”; there was a whole book about it.

Their treatments are wrong, but their flags will be right.

There are another couple of huge indicators of how corrupt and politicized the field of psychology is.

Let’s go back to 1971 and the “Stanford Prison Experiment” (which I’ll call “SPE” from here on in). People who haven’t heard of this “study” can look it up on the ‘net. It’s well documented in psych book after psych book.

The professor who carried out this experiment was one Philip Zimbardo. He’s been given an achievement award by the APA and served as the organization’s president in 2002. Sounds like a big wheel in the psychology field, right?

Trouble is, the SPE was poorly designed (as an experiment) and Zimbardo was not an objective observer. He took active part in “running the prison.”

Students who were involved in the SPE have come out to tell their role in this “experiment” and it turns out that it was mostly a sham:

https://medium.com/s/trustissues/the-lifespan-of-a-lie-d869212b1f62

It’s a long article, but worth the read, because it gives you an insight into how much of “social psych” is a lie with an agenda.

Want to see more scandals in psych? Google “Diederik Stapel” and be prepared to be amazed at a level of scientific & academic fraud that will boggle your mind.

If nothing else that study did show how quickly typical college kids can take to running a gulag to a proper Soviet standard

While the article makes a valid point, it also misses one. There is nothing unscientific about the studies mentioned in the article. They were published specifically so that they could be peer reviewed and replicated, which they were. The fact that the outcomes were not reproducible means one of four things: the hypothesis in the original study was wrong, the original study was conducted improperly, the attempt at replication was conducted improperly, or one or both of the studies hit a statistical outlier which isn’t yet understood. Further attempts at reproduction of the original studies should determine which is the case. That’s how this kind of thing works.

This does however lead to some other things to take note of.

First and foremost, while relatively rare, there are unscrupulous scientists just as there are unscrupulous people in any large group of humans. Then there are mistakes and unseen biases as well. All of these things are reasons why science uses peer review and replication to confirm results. Psychology and psychiatry are places where bias is a serious problem and very, very difficult to eliminate due to the nature of the work.

Both disciplines are also difficult in general because no two people truly think alike. Whether you lean towards the nature or nurture way of thinking it’s impossible to deny that people are shaped by their past experiences to some degree meaning that a truly controlled study is pretty much impossible.

Secondly there is an implicit bias imparted on almost all science due to the way that it’s funded. “Interesting” and “PC” studies get grants while others don’t. This creates pressure on researchers to find things that are attractive to those giving the grant money. Even the most brilliant scientists have to eat and pay bills.

You can see some of this effect in “climate science” research. If you were to ask a serious scientist “What is science?” their explanation would involve “experiments”. “Climate science” has no experiments but rather has models. If you were to hand a serious scientist what that person knows to be an incomplete data set they would tell you that any conclusion drawn from it is questionable. If you were to go a step farther and hand them a data set where you’ve included “guesses that we penciled in” they would toss it back to you and tell you it’s useless because you’ve tainted the data. Neither of these things happens in “climate science” even when you’re dealing with an actual physicist or chemist because to say such a thing is political heresy. If it were known that someone held such views it might make it much harder for them to get grants. Asking for a grant to study the hypothesis that, say, climate change is caused by natural cycles of the Sun and Earth which humans have a limited impact on? Almost no one would even bother to ask for that grant because they know it wouldn’t just not get them the grant, asking for such a grant would likely get them blacklisted and even if they did manage to get the research done they’d probably never get published.

Finally, how we think and even the actual physical and chemical workings of the brain are not well understood at this point. That doesn’t mean we should give up obviously but it does mean that we should take findings with a grain of salt since it may not even be possible to know if a conclusion is correct or not. Such an issue has existed in most scientific disciplines at one point or another.

Some of this is a problem based on methods which, in terms of psychology and psychiatry, could be solved fairly easily. It just wouldn’t be ethical to do so. For example, we know people change their behavior when they’re aware that they are being observed. However, it’s unethical to do experiments on people without their knowledge. Hence we know that many psychology studies have questionable results because they were conducted on knowing subjects. How those people would act under circumstances where they didn’t know/think they were being observed isn’t something we can know or comment on.

strych9,

Since we are talking about science, what evidence do you have that unscrupulous scientists are “relatively rare” compared to other vocations? Unscrupulous behavior is simply the result of someone who does not care about morals/standards and there is no reason why such moral decay would be less prevalent in scientists than any other group of people. And you even speak to this point later in your comment when you state that many/most climate scientists are unscrupulous.

If I had a dollar for every time that bias grossly skewed some science idea in the past decade, I would be a multi-billionaire.

Let’s be honest. Humans are, by-and-large, selfish and greedy. Period. It doesn’t matter how they earn a living. Scientists are just as likely to be selfish and greedy as anyone else. And, just like everyone else, if there is a profit motive in something, scientists will take it.

Note that profit motive would include gain — such as fame, fortune, pleasure — weighed against possible risks — such as financial costs, hits to reputation, loss of family members, possible prison time, etc.

The only thing standing between anyone’s sheer devotion to selfishness and greed is a higher calling to noble standards. If a person does not answer the call to those noble standards, they will take whatever they can get away with. And that includes scientists.

Great analysis, Strych.

I also think that, given that the current wave of legislation is seemingly being driven by a small handful of mass shootings, what everyone is dealing with is a data set that is in most cases only forensics after the fact, because mass shooters usually either kill themselves or die at the scene. So they are usually not interviewable for the most part. All anyone can do is go back after the fact and try to piece together timelines and data from various sources. Or autopsy their brain or do blood toxicology tests in hope that some kind of piece of information will shed light.

Personally I remain concerned about the conflation between mass shooting events and mental health. I actually feel concerned that by going in a wrong direction with this, people who really do need mental health will end up not getting it because the premise was wrong from the beginning.

You feel concerned about “Conflation between mass shooting events and mental health”? Do you seriously suggest that a perfectly mentally healthy (whatever that might mean) person goes and murders dozens of strangers for no apparent reason?

“…people who really do need mental health will end up not getting it…” Don’t we all need mental health? ;-p

Regardless how many journal articles you author or how much your peers applaud you, the poor bastard you were manipulating to achieve your desired fame is still just as dangerous to his neighbors as he was when you started, no more, no less, you did not accomplish *anything*. It’s like an entire class of professionals, hundreds of thousands of them, who make the big bucks by sitting around in a circle jerking off. Absolutely nothing accomplished.

That’s described also in Thomas Kuhn’s “The Structure of Scientific Revolutions.” Net: There’s accepted wisdom, acceptable positions, and mutually reinforcing credibility in “science” as done by people: social credibility steers grants, professional advancement, and even the chance to be heard.

Kuhn argues that the “paradigm” for any field stays stuck until enough reality-pressure (my term) builds to knock it out of the prior “paradigm.” Then you have a “revolution.”

Interestingly, Kuhn, back in the day, was bemoaning the structural impediments to what we’d call “progressive” interpretations and frames of reference these days. He ignores choosing frames of reference to try to create a particular reality. Soviet “acceptable” science chosen for how it supported the society to come, and The New Soviet Man comes to mind. A lot of people starved because agricultural science and practice chosen because it drove toward the glorious imagined future didn’t work so well for making food. Similar with aspects of genetics and psychology.

These days the situation pretty much inverts what Kuhn described: it’s all progressive, all the time, and noting that legal gun ownership does not go with violent crime is Not Permitted. Indeed, legal gun ownership in the US is negatively correlated with violence, crime, and similar.

When people have a problem, often the only solution they can come up with is to throw money at it. It’s as true in this as it is in business and politics, and rarely solves the problem.

They throw Other People’s Money at the issue. Totally different idea.

One has to question the mental health of people who firmly believe disarming law abiding people will prevent criminal acts. They use the worn argument of gun theft to justify it while ignoring the facts about LEO gun loss and the reality about the number of weapons already floating around.

It’s enough to drive you crazy.

The owl told me there’s no hope for them humans, everywhere they go they make problems.

Mental health is whatever the seal of authority says it is. Posters on this forum have said that believing in conspiracy theories is a mental illness, even when they’re not conspiracy theories. Do we really want to allow a subjective set of disorders, disorders that cannot be ruled out or confirmed by any lab tests or imaging, disorders 100% based on the opinions of state “experts” to be a decisive factor in determining our individual rights? The Soviets used “mental illness” to lock up political opponents….guess that couldn’t happen here….

We need to stop pushing dumb narratives. We need to stop saying “arm teachers”. Yes- give the people telling your kids there are 27 genders guns.

We need to stop saying it’s a mental health issue. Remember the psych majors in college? No wonder there’s a replication crisis. Give doctors more power? Yes – let’s get rid of due process and allow a Jewish psychiatrist to put your wife on Prozac and your son on Ritalin and take away your guns. I was in church recently and we were singing “if today you hear God’s voice, harden not your heart…”. Now you get a risperidone Rx.

We need to stop saying “enforce current laws”. Currently, you lose your gun rights forever for misdemeanor domestic violence. Anyone who has been through a divorce knows this is thrown around falsely. Thank you boomer-demigod Ronald Reagan for giving us abortions and no-fault divorce in California.

We need to repeal the 19th, silence the lyingpress, and embrace a culture of natalism. Subsidies for families that have kids. Then we can keep our guns. Only then.

Merry Christmas!

Only anti gun rights advocates call it ‘arming teachers’. No one in his right mind calls for issuing guns to every school employee. We only want to stop preventing concealed carriers from carrying their guns – something they are already doing every day everywhere else – in schools.

And what does the psychiatrist being Jewish have to do with anything?

Spending does nothing. There is not a direct correlation between spending and results. The locations that spend the most on education don’t have the top performance. The loony shooters are often known and medicated. You usually don’t hear how they were nice, kind, seemingly balanced individuals who just did a terrible thing that was completely out of character.


It is really sad how many people are scared of firearms. But, I get it, I think a lot of us get it. It has to do with the underlying pressure of anxiety we feel in this and other countries. But anxiety cannot be cured by pills. Pills are a cover for that. Talking to people with the intent of repairing the nervous system is the way to go. Look at it this way: when my son was younger and he wanted something at the store, if I said “no”, he would cry about it. If I explained to him in as much detail as I could why he could not have something, he got over it much faster. People are like that. Now, some just do not care and will impose their fears onto others. Now, I am not saying their fears are invalid, to a small degree. https://unabis.com/cbd-blog/what-is-full-spectrum-cbd-oil/

