Long after Breakfast at Tiffany’s and before the A-Team, George Peppard played freelance investigator Thomas Banacek for two seasons on television. We loved the show. Banacek used to get involved with a convoluted case, then quote some Polish proverb to explain his solution process. I have no idea if any of these proverbs were real, but I always remember this one, “If you’re not sure that it’s potato borscht, there could be children working in the mines,” and his translation, “What you don’t know can hurt you a lot.” Many discussions of gun rights vs. gun control lead to a citation of a statistic, or a series of statistics, in support of the argument. The first thing to consider is not whether these statistics support your worldview, but whether they reflect reality. Why? Because what you don’t know can hurt you a lot. Or the non-Polish corollary: it isn’t what we don’t know that gives us trouble, it’s what we know that ain’t so.

First consider the methodology behind the statistics. Good pollsters know that bad questions can skew the results:

The wording of a question is extremely important. We are striving for objectivity in our surveys and, therefore, must be careful not to lead the respondent into giving the answer we would like to receive. Leading questions are usually easily spotted because they use negative phraseology. As examples:

Wouldn’t you like to receive our free brochure?

Don’t you think the Congress is spending too much money?

Pollsters with an agenda thrive on leading and loaded questions, and know that the answer to a question can sometimes be influenced by the preceding questions. So if I wanted more no answers to a question about gun control, I’d probably ask questions about home defense first. If I wanted more yes answers, I’d probably ask about murder/suicides and spree killings beforehand.

The extreme form of the leading question is the push poll, as described on Wikipedia:

Perhaps the most famous use of push polls is in the 2000 United States Republican Party primaries, when it was alleged that George W. Bush’s campaign used push polling to torpedo the campaign of Senator John McCain. Voters in South Carolina reportedly were asked “Would you be more likely or less likely to vote for John McCain for president if you knew he had fathered an illegitimate black child?” The poll’s allegation had no substance, but was heard by thousands of primary voters. McCain and his wife had in fact adopted a Bangladeshi girl.

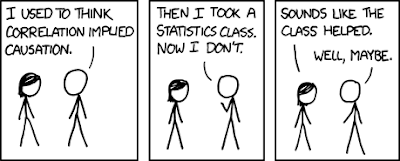

We have often read that greater ownership of guns corresponds with less crime in a given place over a given period. Therefore, we are told, ipso facto More Guns = Less Crime. We’ve also read the opposite.

Ipso facto is Latin for, “by that fact itself.” The classic logical fallacies are also expressed in Latin, one of them being post hoc ergo propter hoc. That means, “after this, therefore because of this.” Let’s look at some examples of that logical fallacy:

After Brad Kozak practiced at the gun range, he started shooting more accurately, therefore his range practice made him a better shot.

After Brad Kozak started blogging at TTAG, more people started reading TTAG, therefore Brad’s writing led to TTAG’s increased readership.

After Brad Kozak joined the NRA, the NRA endorsed Harry Reid, therefore Brad’s joining influenced the NRA’s endorsement.

Although the first seems likely, the second possible and the third absurd, none of these statements are intrinsically logically sound because they don’t prove causation – only correlation. Brad may have also had corrective eye surgery, or gotten a better weapon to become a better shot. People may be reading TTAG because of other writers. And the NRA probably didn’t consider Brad’s feelings when they endorsed Reid. When given such statistics, we need to know whether the statistician eliminated other possible causes and established a clear connection between the events.

There’s another problem with statistics – we don’t understand the math very well. Suppose you’re watching someone’s dog. They tell you that Fido barks constantly when hungry, but when you feed him well, he barks less than five percent of the time. When Fido is barking, what is the likelihood that he is hungry?

I put the answer at the end of this blog. I barely remember Prob/Stat, but this seems like an easy puzzle. We’re given 100% barking when hungry and only 5% otherwise, so if Fido is barking it seems obvious that there is 95% probability that he is hungry rather than just ornery. Right?

Odds Are, It’s Wrong. Science fails to face the shortcomings of statistics

It’s science’s dirtiest secret: The “scientific method” of testing hypotheses by statistical analysis stands on a flimsy foundation. Statistical tests are supposed to guide scientists in judging whether an experimental result reflects some real effect or is merely a random fluke, but the standard methods mix mutually inconsistent philosophies and offer no meaningful basis for making such decisions. Even when performed correctly, statistical tests are widely misunderstood and frequently misinterpreted. As a result, countless conclusions in the scientific literature are erroneous, and tests of medical dangers or treatments are often contradictory and confusing.

Replicating a result helps establish its validity more securely, but the common tactic of combining numerous studies into one analysis, while sound in principle, is seldom conducted properly in practice.

Experts in the math of probability and statistics are well aware of these problems and have for decades expressed concern about them in major journals. Over the years, hundreds of published papers have warned that science’s love affair with statistics has spawned countless illegitimate findings. In fact, if you believe what you read in the scientific literature, you shouldn’t believe what you read in the scientific literature.

“There is increasing concern,” declared epidemiologist John Ioannidis in a highly cited 2005 paper in PLoS Medicine, “that in modern research, false findings may be the majority or even the vast majority of published research claims.”

Ioannidis claimed to prove that more than half of published findings are false, but his analysis came under fire for statistical shortcomings of its own. “It may be true, but he didn’t prove it,” says biostatistician Steven Goodman of the Johns Hopkins University School of Public Health. On the other hand, says Goodman, the basic message stands. “There are more false claims made in the medical literature than anybody appreciates,” he says. “There’s no question about that.”

Nobody contends that all of science is wrong, or that it hasn’t compiled an impressive array of truths about the natural world. Still, any single scientific study alone is quite likely to be incorrect, thanks largely to the fact that the standard statistical system for drawing conclusions is, in essence, illogical. “A lot of scientists don’t understand statistics,” says Goodman. “And they don’t understand statistics because the statistics don’t make sense.”

And what about Fido?

Answer: That probability cannot be computed with the information given. The dog barks 100 percent of the time when hungry, and less than 5 percent of the time when not hungry. To compute the likelihood of hunger, you need to know how often the dog is fed, information not provided by the mere observation of barking.

OK, that makes sense. If I rarely feed Fido, he’ll probably be barking almost 100% of the time because he’s hungry, and he always barks when he’s hungry. So my 95% guess would be close.

But if I always keep Fido well fed, he’ll only be barking about 5 or 10% of the time, and rarely because he’s hungry. So my 95% guess would be dead wrong. And sometimes—especially when you use stats effectively to justify a position on gun control—being dead wrong can get you dead.
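The Fido puzzle is a Bayes' rule problem, and a few lines of Python show how much the answer swings with the missing piece of information. This is only a sketch: the function name and the list of "how often is Fido hungry" values are made up for illustration, and the 5% figure is used as an upper bound because the puzzle says "less than 5 percent."

```python
def p_hungry_given_bark(p_hungry, p_bark_if_hungry=1.0, p_bark_if_fed=0.05):
    """Bayes' rule: probability Fido is hungry, given that he is barking.

    p_hungry is the share of the time Fido is hungry. The puzzle never
    gives this number, so every value passed in here is an assumption.
    """
    bark_and_hungry = p_bark_if_hungry * p_hungry
    bark_and_fed = p_bark_if_fed * (1.0 - p_hungry)
    return bark_and_hungry / (bark_and_hungry + bark_and_fed)


# Hypothetical feeding habits, from almost never hungry to almost always hungry.
for prior in (0.01, 0.05, 0.50, 0.95):
    answer = p_hungry_given_bark(prior)
    print(f"hungry {prior:.0%} of the time -> P(hungry | barking) = {answer:.1%}")
```

Under these assumptions the 95% guess is only about right when Fido is hungry roughly half the time. If he is hungry 1% of the time, a bark means hunger less than one time in five.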

The other problem with medical studies is that oftentimes the nature of the study is misstated, either to make it simpler to understand or to conceal the agenda of the person presenting the "statistics" to be studied.

How many times have you been watching the news and seen a chirpy newsreader say something like this: "Drinking coffee may lead to greater intelligence" or "that blueberry muffin you just ate may be more harmful than cyanide."

The news person then goes on to say that some study has shown a greater instance of A compared to B, or an advocacy group has warned about the dangers of This compared to That. By the end of the story, you realize you've been hoodwinked because the study doesn't really show that eating flowers will help you avoid cancer of the eardrum or whatever it supposedly showed, rather, the lay-people interpreting a scientific study have extrapolated the actual data to the point where their assertions have no basis in fact.

I call this "cantilevered reasoning." You start out with one fact, then you make an assumption based on that fact. Then, assuming your first assumption is correct, you make another assumption. Then a third assumption based on the assumption that the first two assumptions are true. By the time you get to the typical "news story" or political argument, you are quoting "data" that is about as reliable as tea-leaf reading, throwing the I-Ching, or just flipping a coin and making a wild-ass guess.
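A rough way to put numbers on this: if each assumption in the chain is independently likely to be true, the chance that the whole chain holds is the product of the individual chances. The sketch below uses made-up confidence values and a hypothetical function name, and the independence assumption is mine, not something any particular study guarantees.

```python
def chain_confidence(step_confidences):
    """Chance that every link in a chain of independent assumptions holds."""
    result = 1.0
    for p in step_confidences:
        result *= p
    return result


# Hypothetical numbers: one solid fact, then three stacked assumptions,
# each of which is 80% likely to be right on its own.
confidence = chain_confidence([1.0, 0.8, 0.8, 0.8])
print(f"Chance the whole chain holds: {confidence:.0%}")
```

With those numbers the final claim is barely better than the coin flip.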

Donal – great post…but I can assure you your third one is wrong – the NRA couldn't care less what I think (or apparently what a majority of its members think)…it's that "inside the beltway" thinking rearing its ugly head.

One of the most entertaining reads I've found that deals with statistics and data was by Michael Crichton – "State of Fear." The cool thing about the book was that, for every study or paper he used in the book, he provided footnoted references. His premise was that an unofficial cabal of the media, special-interest groups, research scientists and politicians regularly uses stats to further its own goals – leading to junk science and bad laws.

My dad used to drive me nuts when I was a kid, forcing me to think through marketing claims on TV. "Why would you want a 10-foot-tall washing machine?" he'd ask, after watching a commercial for Dash. "Would it clean clothes better because it's tall? What are they claiming here? What are they implying?" Basically, he taught me how to think objectively, using reason and logic – and gave me an inherent distrust of taking stats and studies at face value.

Would that everybody's parents would do the same for them. Perhaps then we could avoid snake oil pitches like those for healthcare and climate change.

LOL… so you grew up to become a marketing guru!

If you can't beat 'em, join 'em?

Sorry for the long post; I apologize in advance.

I used to be very into the statistics of gun control and would love to debate (and usually won; it is a lot easier when the facts are on your side and you quote the CDC (Centers for Disease Control) and the NAS (National Academy of Sciences)). However, I was in error in doing so. If I may quote someone who is far better with words than I am, John Ross, the author of "Unintended Consequences":

THEY SAY: “If we pass this License-To-Carry law, it will be like the Wild West, with shootouts all the time for fender-benders, in bars, etc. We need to keep guns off the streets. If doing so saves just one life, it will be worth it.”

WE SAY: “Studies have shown blah blah blah” (FLAW: You have implied that if studies showed License-To-Carry laws equaled more heat-of-passion shootings, Right-To-Carry should be illegal.)

WE SHOULD SAY: “Although no state has experienced what you are describing, that’s not important. What is important is our freedom. If saving lives is more important than the Constitution, why don’t we throw out the Fifth Amendment? We have the technology to administer an annual truth serum session to the entire population. We’d catch the criminals and mistaken arrest would be a thing of the past. How does that sound?”

Here is the link http://web.archive.org/web/20070115025334/www.joh…

The above link is from his archives; his current link is http://www.john-ross.net/inrange.php

Best wishes

NukemJim

Sorry for such a long post
